Buyer's Guide: Transport Protocols

This article appears in the February/March issue of Streaming Media magazine, the annual Streaming Media Industry Sourcebook. In these Buyer's Guide articles, we don't claim to cover every product or vendor in a particular category, but rather provide our readers with the information they need to make smart purchasing decisions, sometimes using specific vendors or products as exemplars of those features and services.

Streaming media may look like magic to the end user. However, for the content creator, there is a very tangible process that must be followed to make streaming video magically travel from the playout server to the viewer’s desktop.

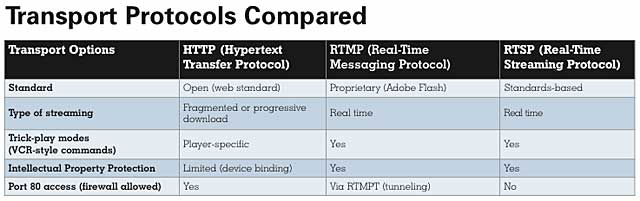

In other articles in this year’s Sourcebook, you’ll read about codecs and formats. But an equally important, and often misunderstood, delivery consideration is which protocol to use to transport your video content over the internet. Three basic transport protocols are used for today’s streaming media delivery: HTTP, RTMP, and RTSP. Each protocol has its pros and cons, and each suits different streaming media applications.

HTTP

Let’s start with the most broadly used transport protocol of them all: HTTP (Hypertext Transfer Protocol). Almost every web user is familiar with this protocol. It is the default protocol used by web browsers to move text, images, or entire webpages of content across the web from a server to the user’s desktop.

The good news with HTTP is that not all content has to reside in one place. Think of HTTP as the highway connecting a number of servers (one holding text, another images, and a third audio content) to a user’s desktop browser (the warehouse). It may be more cost-effective to send a truck to each location and aggregate all the goods at the warehouse. The downside is that making all of those trips takes a considerable amount of time (in IT terms).

Another HTTP advantage is that HTTP servers are far more common than RTMP or RTSP servers, given the sheer amount of content delivered by HTTP. This, in turn, makes HTTP delivery cheaper and simpler to operate than the other options.

That said, HTTP’s one-size-fits-all approach is not necessarily the best choice for streaming media, because live streaming needs some form of priority over other types of traffic. Video and audio are very time-sensitive: they need to arrive on time and in a specific order to be of use to the viewer.

Unfortunately, HTTP is designed to deliver content from various locations in no particular order; the code in the underlying webpage then figures out where to place each piece. That is why some parts of a page load faster than others: the image server may be less busy than the text server, or vice versa, which affects which part of the page loads first. This behavior can be a big problem if you are using HTTP to deliver streaming media.

Another issue with HTTP delivery is that it has historically required an entire file to be loaded into the browser before that file could be viewed. This has been addressed in a variety of ways: for images by in-line loading and for audio by progressive download. Video, however, has been trickier because of the size of video files.

To remedy this shortfall, a number of solutions break a large video file into many smaller files. For instance, content delivery network (CDN) operator Akamai fragments files into 10-second chunks. Rather than waiting for an entire episode of a TV series to be delivered, the receiving player can start playback as soon as the minimum number of segments has arrived. (Apple and Akamai both use 10-second chunks, while Adobe and Microsoft use 2-second chunks.)
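To make the chunking trade-off concrete, here is a small arithmetic sketch. The 44-minute episode length and the assumption that a player waits for three segments before starting are hypothetical values chosen for illustration, not figures from any vendor.

```python
import math

def segment_count(duration_s: float, segment_s: float) -> int:
    """Number of fixed-length segments needed to cover duration_s seconds of video."""
    return math.ceil(duration_s / segment_s)

def startup_media_s(segment_s: float, min_segments: int) -> float:
    """Seconds of media the player must receive before playback can begin."""
    return segment_s * min_segments

# Hypothetical 44-minute episode; min_segments=3 is an assumed player setting.
EPISODE_S = 44 * 60
for seg in (10, 2):
    print(f"{seg}-second chunks: {segment_count(EPISODE_S, seg)} segments, "
          f"{startup_media_s(seg, min_segments=3)} s buffered before playback")
```

Shorter chunks mean the player can start sooner, at the price of many more individual requests for the same episode.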

Of course, if the next segment is delayed, playback is also delayed. To offset this problem, some delivery solutions can request the next segment at a lower quality or resolution, which requires less bandwidth, to keep playback smooth at the cost of picture quality.
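A minimal sketch of that quality trade-off, assuming a hypothetical ladder of four renditions and an assumed 80% safety margin on measured throughput: the player picks the highest bitrate that fits, and falls back to the lowest one when nothing fits.

```python
# Hypothetical bitrate ladder in kilobits per second, highest first.
LADDER_KBPS = (3000, 1500, 800, 400)

def pick_bitrate(measured_kbps: float, ladder=LADDER_KBPS, safety: float = 0.8) -> int:
    """Return the highest rendition that fits within `safety` x measured throughput,
    or the lowest rendition if none fits."""
    budget = measured_kbps * safety
    for rate in ladder:  # ladder is ordered highest first
        if rate <= budget:
            return rate
    return ladder[-1]

print(pick_bitrate(2000))  # 1500
```

The safety margin leaves headroom for throughput dips; real players use their own, more elaborate heuristics.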

HTTP file delivery is a best-effort, one-way process, and return communication is possible only after a file transfer has been completed. This is the reason for the fragmented-file approach: by breaking the video file into smaller segments, the HTTP-compliant video player can send back information after each chunk is received, or after a set time has elapsed if a segment has not arrived. It can then request a retransmit of the same segment or a new segment at a different bitrate.
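The per-segment feedback loop can be sketched as follows. The `fetch` callback, the ladder, and the simulated network are hypothetical stand-ins; returning `None` represents a segment that did not arrive within the player's time limit, and the player then re-requests the same segment one step lower on the ladder.

```python
from typing import Callable, List, Optional, Tuple

LADDER_KBPS = (3000, 1500, 800, 400)  # hypothetical renditions, highest first

def play(num_segments: int,
         fetch: Callable[[int, int], Optional[bytes]],
         start_rate: int = 3000,
         max_attempts: int = 3) -> List[Tuple[int, int]]:
    """Request segments one at a time. After each request the player learns whether
    the segment arrived; on a timeout (None) it re-requests the same segment at the
    next lower bitrate. Returns (segment index, bitrate) for each segment played."""
    level = LADDER_KBPS.index(start_rate)
    played = []
    for index in range(num_segments):
        for _ in range(max_attempts):
            data = fetch(index, LADDER_KBPS[level])
            if data is not None:
                played.append((index, LADDER_KBPS[level]))
                break
            # Timed out: step down one rendition and retry the same segment.
            level = min(level + 1, len(LADDER_KBPS) - 1)
        else:
            raise RuntimeError(f"segment {index} unavailable after {max_attempts} attempts")
    return played

# Simulated network: from segment 2 onward, anything above 1500 kbps times out.
def fake_fetch(index: int, rate: int) -> Optional[bytes]:
    if index >= 2 and rate > 1500:
        return None
    return b"\x00" * 16  # stand-in for segment payload

print(play(4, fake_fetch))  # [(0, 3000), (1, 3000), (2, 1500), (3, 1500)]
```

For brevity, this sketch only ever steps down; a practical player would also step back up when throughput recovers.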

RTSP

In contrast, RTSP (Real-Time Streaming Protocol) is a “network control protocol” that is specifically designed for delivering streaming media. Unlike HTTP, which works best when segments are sent in sequential order, RTSP allows the viewer to jump from one point of a stream to another, including trick-play modes such as fast-forward and rewind, using VCR-like controls. Viewing can also begin as soon as the first bits reach the RTSP player, so a 2- or 10-second segment delay doesn’t affect RTSP delivery.
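RTSP requests are plain text and look much like HTTP requests. As a sketch of how a VCR-style jump is expressed, the function below builds an RTSP/1.0 PLAY request (as defined in RFC 2326) whose `Range` header asks the server to start at a given point in normal play time (`npt`). The URL and session ID are hypothetical; in a real exchange, the session ID comes from the server’s reply to an earlier SETUP request.

```python
def rtsp_play_request(url: str, cseq: int, session: str, start_s: float) -> str:
    """Build an RTSP/1.0 PLAY request that starts playback at start_s seconds."""
    lines = [
        f"PLAY {url} RTSP/1.0",
        f"CSeq: {cseq}",                 # request sequence number
        f"Session: {session}",           # session ID issued by the server
        f"Range: npt={start_s:.3f}-",    # open-ended range: play from start_s onward
    ]
    return "\r\n".join(lines) + "\r\n\r\n"

# Hypothetical URL and session ID: jump to the 10-minute mark.
req = rtsp_play_request("rtsp://media.example.com/episode1", cseq=5,
                        session="12345678", start_s=600)
print(req)
```

Seeking is simply another request on the same control connection, which is why RTSP does not need to wait for file segments to arrive.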

It’s also worth knowing that RTSP can support multicasting: a single feed can be delivered to many users without a separate stream for each of them. HTTP cannot do this; it is a true one-to-one delivery system.
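Some back-of-the-envelope arithmetic shows why this matters. The audience size and bitrate below are hypothetical, and the multicast case assumes every network between the server and the viewers supports multicast.

```python
def server_egress_mbps(viewers: int, stream_mbps: float, multicast: bool) -> float:
    """Bandwidth leaving the server: one copy per viewer for unicast,
    a single copy for multicast (the network replicates it downstream)."""
    return stream_mbps if multicast else viewers * stream_mbps

print(server_egress_mbps(10_000, 1.5, multicast=False))  # 15000.0
print(server_egress_mbps(10_000, 1.5, multicast=True))   # 1.5
```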

For these reasons, RTSP is often viewed as synonymous with streaming media, while HTTP is seen as merely a file transfer protocol. While this is technically accurate, the HTTP fragmentation approach is so effective at smoothing out file transfer issues that the difference is almost imperceptible to the end user.

RTSP is at the heart of streaming server systems such as QuickTime Streaming Server, Windows Media Services, RealNetworks’ Helix, Darwin Streaming Server (an open source version of QuickTime Streaming Server maintained by Apple), and even Skype and Spotify.

Adobe uses its own proprietary messaging protocol, RTMP (Real-Time Messaging Protocol), which plays a role similar to RTSP’s, for delivery from Flash Media Server (FMS) to the Flash Player in users’ browsers.

RTMP

For people who plan to stream Flash video, Adobe FMS offers RTSP, HTTP, and RTMP options. Unless there is a specific reason to use RTSP, it makes sense to adopt RTMP to ensure that the data flow is fully compatible from end to end.

RTMP, like RTSP, is defined as a stateful protocol. From the first time a client player connects until the time it disconnects, the streaming server keeps track of the client’s actions or “states” for commands such as play or pause.
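A minimal sketch of what “stateful” means on the server side, assuming a simplified, hypothetical set of states and commands (this is not RTMP’s actual message set): the server keeps one record per client for the life of the connection and only accepts commands that make sense in the current state.

```python
from enum import Enum

class State(Enum):
    CONNECTED = "connected"
    PLAYING = "playing"
    PAUSED = "paused"
    CLOSED = "closed"

# Hypothetical allowed transitions: (current state, command) -> next state.
TRANSITIONS = {
    (State.CONNECTED, "play"): State.PLAYING,
    (State.PLAYING, "pause"): State.PAUSED,
    (State.PAUSED, "play"): State.PLAYING,
    (State.CONNECTED, "close"): State.CLOSED,
    (State.PLAYING, "close"): State.CLOSED,
    (State.PAUSED, "close"): State.CLOSED,
}

class ClientSession:
    """Per-client record kept from connect to disconnect."""

    def __init__(self, client_id: str):
        self.client_id = client_id
        self.state = State.CONNECTED
        self.history = [State.CONNECTED]  # every state the client has passed through

    def command(self, cmd: str) -> State:
        key = (self.state, cmd)
        if key not in TRANSITIONS:
            raise ValueError(f"{cmd!r} not allowed while {self.state.value}")
        self.state = TRANSITIONS[key]
        self.history.append(self.state)
        return self.state

session = ClientSession("viewer-42")
for cmd in ("play", "pause", "play", "close"):
    session.command(cmd)
print([s.value for s in session.history])
```

A plain HTTP server, by contrast, treats each segment request independently and keeps no such per-client record between requests.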

When a session between the client and the server is established, the server begins sending video and audio content as a steady stream, and it continues until the server or player client closes the session. Recent advancements also accommodate brief interruptions in the server-client connection by playing back a small amount of content from a local buffer.

Encryption is another hallmark of RTMP, as RTMP encrypted (RTMPE) protects packets on an individual basis (more on this later). Some HTTP-based solutions are beginning to address integrated DRM, but the majority of HTTP delivery cannot support encryption at a packet level.

The two downsides of RTMP versus RTSP or HTTP are the need for a plug-in (the Flash Player plug-in for popular web browsers) and the fact that most RTMP content is sent via the “nonstandard” Port 1935 rather than via Port 80, which carries HTTP traffic and is almost always open.

Blurring the lines between HTTP and RTMP, however, is the tunneling feature in RTMP (called RTMPT), which allows RTMP to be encapsulated within HTTP requests. This allows RTMP to traverse firewalls by appearing to be HTTP traffic on Port 80.
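The tunneling idea can be sketched generically: opaque binary data is placed in the body of an ordinary HTTP POST, and the far end pulls it back out. The path, host, and content type below are hypothetical placeholders chosen for illustration; this is not the actual RTMPT wire format.

```python
def wrap_in_http_post(payload: bytes, host: str, path: str) -> bytes:
    """Encapsulate an opaque binary payload in an HTTP/1.1 POST request so it
    can travel over Port 80 like ordinary web traffic."""
    head = (
        f"POST {path} HTTP/1.1\r\n"
        f"Host: {host}\r\n"
        "Content-Type: application/octet-stream\r\n"
        f"Content-Length: {len(payload)}\r\n"
        "\r\n"
    ).encode("ascii")
    return head + payload

def unwrap_http_post(request: bytes) -> bytes:
    """Recover the payload on the far side of the tunnel."""
    head, _, body = request.partition(b"\r\n\r\n")
    length = next(int(line.split(b":", 1)[1].decode("ascii").strip())
                  for line in head.split(b"\r\n")
                  if line.lower().startswith(b"content-length:"))
    return body[:length]

chunk = bytes([0x03, 0x00, 0x00, 0x00])  # stand-in for a few bytes of RTMP data
request = wrap_in_http_post(chunk, "media.example.com", "/tunnel/send")
assert unwrap_http_post(request) == chunk
```

To a firewall or proxy, the wrapped request is indistinguishable in form from any other web request, which is what lets it through.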

What About MPEG DASH?

At first blush, one might think that choosing RTSP or RTMP would be the obvious choice for any streaming media application; it’s like choosing a race car to run races rather than choosing a truck. But, as so often happens in real life, what seems obvious isn’t necessarily so.

The big objection to using RTSP or RTMP instead of HTTP is that RTSP and RTMP require a near-continuous link between the sending server and the viewer. In contrast, HTTP content can be stored on ordinary web caches and delivered from there as needed. The viewer may not see a difference, but whoever pays for bandwidth will notice that the HTTP model uses less of this resource and, thus, costs less to support.

Add the fact that the MPEG DASH (Dynamic Adaptive Streaming over HTTP) standard is due to be finalized, and the balance may tip further against RTSP and RTMP. MPEG DASH is designed to be a more open standard for adaptive streaming over HTTP, as opposed to the proprietary approaches currently in use, such as Microsoft Smooth Streaming, Adobe Dynamic Streaming, and Apple HTTP Live Streaming (HLS). If MPEG DASH catches on, it could encourage content producers to use HTTP instead of RTSP and RTMP for streaming. (For a rundown on MPEG DASH and what it means for streaming media, read “What Is MPEG DASH.”)
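In DASH, the player learns which bitrates are available from an XML manifest, the Media Presentation Description (MPD). The sketch below parses a trimmed, illustrative MPD with Python’s standard library and lists its video renditions. The IDs, bitrates, and resolutions are hypothetical, and a real manifest would also describe segment URLs and timing.

```python
import xml.etree.ElementTree as ET

# Trimmed, illustrative manifest with three hypothetical video renditions.
MPD = """<MPD xmlns="urn:mpeg:dash:schema:mpd:2011" type="static"
     mediaPresentationDuration="PT44M" minBufferTime="PT2S"
     profiles="urn:mpeg:dash:profile:isoff-on-demand:2011">
  <Period>
    <AdaptationSet mimeType="video/mp4">
      <Representation id="low" bandwidth="400000" width="640" height="360"/>
      <Representation id="mid" bandwidth="1500000" width="1280" height="720"/>
      <Representation id="high" bandwidth="3000000" width="1920" height="1080"/>
    </AdaptationSet>
  </Period>
</MPD>"""

NS = {"dash": "urn:mpeg:dash:schema:mpd:2011"}

def list_representations(mpd_text: str):
    """Return (id, bandwidth in bits per second) for each Representation."""
    root = ET.fromstring(mpd_text)
    return [(rep.get("id"), int(rep.get("bandwidth")))
            for rep in root.iterfind(".//dash:Representation", NS)]

print(list_representations(MPD))
```

Because the manifest and segments are ordinary files fetched over HTTP, they can be served and cached by the same infrastructure as any other web content.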

Then there is the access issue. Firewalls and proxies generally allow HTTP traffic in via Port 80. The same is not necessarily true for RTSP and RTMP, which use different ports. For instance, RTMP uses Port 1935, and many firewalls block remote access over that port. (If this delivery path fails, Flash is configured to look for alternative ways in.)

That said, RTMPT makes it possible to send RTMP feeds tunneled through an HTTP connection by wrapping the RTMP data in HTTP requests. This approach lets CDNs move RTMP data through Port 80 connections.
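The “alternative ways in” can be sketched as an ordered list of connection attempts. The order below is an assumption for illustration, modeled loosely on the fallback behavior described above, and is not a documented Flash Player specification. The two firewall functions are hypothetical: one filters by port number only, and the other passes nothing but HTTP on Port 80.

```python
from typing import Callable, Optional, Tuple

Route = Tuple[str, int]

# Hypothetical order: native RTMP on Port 1935, then ports firewalls usually
# leave open, then RTMP tunneled inside HTTP (RTMPT) on Port 80.
ATTEMPTS = [("rtmp", 1935), ("rtmp", 443), ("rtmp", 80), ("rtmpt", 80)]

def first_working_route(allows: Callable[[str, int], bool]) -> Optional[Route]:
    """Return the first (scheme, port) the network lets through, or None."""
    for scheme, port in ATTEMPTS:
        if allows(scheme, port):
            return scheme, port
    return None

def port_filter(scheme: str, port: int) -> bool:
    # Blocks by port number only: web ports pass, Port 1935 does not.
    return port in (80, 443)

def http_only_proxy(scheme: str, port: int) -> bool:
    # Inspects traffic and passes only HTTP on Port 80,
    # so only the tunneled RTMPT attempt gets through.
    return scheme == "rtmpt" and port == 80

print(first_working_route(port_filter))      # ('rtmp', 443)
print(first_working_route(http_only_proxy))  # ('rtmpt', 80)
```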

Meanwhile, RTSP and RTMP do have a number of other factors in their favor. First and foremost is the fact that they are designed for streaming media, with all that implies. In particular, the fact that RTSP and RTMP feeds provide true VCR-like controls in real time and start playing upon connection is a big plus with viewers.

The second big plus for RTSP and RTMP is security. Because the video is streamed rather than transferred, viewers don’t end up with files that they can hold onto afterwards. As mentioned earlier in this article, Adobe offers a secured version of RTMP known as RTMPE (RTMP encrypted). As the name suggests, RTMPE provides real-time encryption during streaming for enhanced content protection.

In contrast, HTTP is a form of file transfer, which means the content ends up in viewers’ temporary files. Granted, it is possible to build in security measures to prevent piracy, such as file encryption, but the fact remains that viewers have the files in their possession. It is also possible to protect content by using Secure HTTP (HTTPS) for file transmission. Unfortunately, HTTPS has not been widely accepted as a form of DRM protection, which means that some content rightsholders may be unwilling to let licensees use it.

A third item in RTSP and RTMP’s favor is the amount of data they collect about users’ actions during video sessions. With these protocols, you can see how long viewers watched, when they fast-forwarded or disconnected, and what sections they viewed more than once.
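A sketch of how such data might be summarized, assuming a hypothetical session log in which the server records each span of the video (in seconds) that it streamed between two client commands such as play, seek, or stop. Watch time is the sum of the spans, a jump forward past unwatched material counts as a fast-forward, the end of the last span marks where the viewer disconnected, and 10-second sections that appear in more than one span were viewed more than once.

```python
from collections import Counter
from typing import List, Tuple

Span = Tuple[int, int]  # (start, end) position in the video, in seconds

def summarize(spans: List[Span], bucket_s: int = 10) -> dict:
    watched = sum(end - start for start, end in spans)
    # Next span starting after the previous one ended = a jump forward.
    fast_forwards = sum(1 for (_, prev_end), (next_start, _) in zip(spans, spans[1:])
                        if next_start > prev_end)
    views = Counter()
    for start, end in spans:
        for bucket in range(start // bucket_s, (end - 1) // bucket_s + 1):
            views[bucket] += 1
    rewatched = sorted(b * bucket_s for b, n in views.items() if n > 1)
    return {"watched_s": watched,
            "fast_forwards": fast_forwards,
            "rewatched_from_s": rewatched,
            "disconnected_at_s": spans[-1][1]}

# Hypothetical session: watch 2 minutes, skip ahead to 5:00, rewind 30 s, then leave.
log = [(0, 120), (300, 360), (330, 400)]
print(summarize(log))
```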

The moral to the story is this: In the right circumstances, HTTP, RTSP, and RTMP can make sense as transport protocols. The question is which one makes the most sense for you.

To figure this out, talk with your tech people and with representatives of any CDNs you are considering. The key question is how well HTTP file fragmentation performs: If today’s technology is good enough for your needs, HTTP could be the more economical option. On the other hand, don’t decide on cost alone, or the savings could end up costing you your business.


13 Feb 2012